Shannon Vallor is worried about killer robots. But not for the reasons you might think.

The philosopher does not think a new race of armed, self-aware machines will be taking over the world. Not any time soon, anyway. Probably not ever.

But Vallor – a leading American scholar of the ethics of data and artificial intelligence shortly to flit to Edinburgh University – reckons we should be concerned with military robots.

Why? Because of the people who may control them.

Science fiction writers have fretted for decades about the moral philosophy of smart robots. What, at least in popular culture, we have not done so much is think about the ethics of dumb humans who will suddenly have control of vast amounts of artificial intelligence. That is where thinkers like Vallor come in.

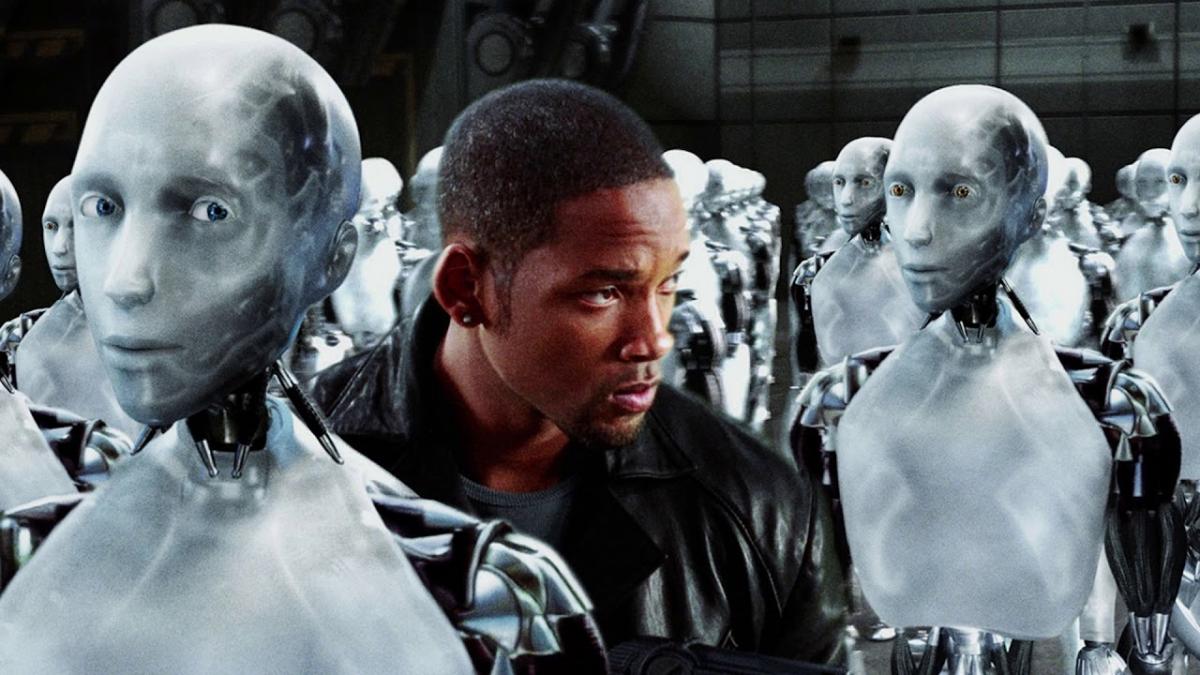

So take robot ethics. Most people know the three laws of fantasy and popular science writer Isaac Asimov, the American behind I, Robot:

1 A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2 A robot must obey orders given it by human beings except where such orders would conflict with the First Law.

3 A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

But what are the laws for human robot handlers? And, perhaps more prosaically, what are the ethical guidelines for those flesh-and-blood controllers of vast quantities of data, manipulated using machine learning?

Vallor, speaking by phone from Santa Clara in California, wants us all to get thinking about these issues.

Asked if she and other AI ethicists worry about killer robots, she says: “We do not.”

And then, after a pause, she adds: “Well, actually, we do need to worry about the use of robots by people to kill people.

“But we do not need to worry about robots who make up their own minds to kill people. Or AI that becomes more intelligent broadly speaking than people. It is not that these things are impossible. But they are nowhere on the technical horizon at the moment.”

Prof Vallor admits she and colleagues do chat about such things in the pub. “We may debate what we call artificial general intelligence,” she said. “Do you think that is going to come in 100 years, 500 years, ever? We might get into some philosophical speculation. But it is not what we worry about on ethical grounds.

“I encounter a number of people who have listened to the voices of people like Elon Musk or the late Stephen Hawking saying that artificial intelligence represents a competitor to humanity, something that will develop its own will or its own goal, that it will become malicious or that it could turn against humanity. This is purely science fiction.”

She continued: “There is a lot of hype and misconceptions around data science and artificial intelligence and a lot of people fear the wrong things about these technologies.

“So making sure people – even regulators and legislators – have the right understanding of what these technologies are, of how these tools work, is a major focus area for me.”

The big issue? The abuse of technology, the abuse of data. And unthinking stupidity with them both that has horrible unintended consequences.

A good example of the latter: cars that drive themselves. Autonomous vehicles are on the cusp of being an everyday reality. There are horror stories of their being abused, of bad actors using the internet to take them over, drive them into crowds – or banks.

Vallor has a slightly different perspective. She is not dismissing safety questions but is also thinking about long-term implications for the workforce, for the environment.

She explained: “One set of projections has been that self-driving cars will allow driving to be more efficient and less wasteful, that you won’t need as many cars on the road.

“But there is also some evidence that it could work the other way. In places where ride-sharing vehicles like Uber or Lyft are very easily accessible and relatively affordable we have seen an increase in overall miles driven and trips taken. So the question is: if the technology becomes much safer and cheaper and easier because you don’t even have to drive yourself, you don’t have to find a designated driver when you go to the pub, you have a car that takes care of all these things for you, will people drive even more than before?”

Will we all get fat if we are chauffeured around in robocars? “Sure,” said Vallor. “We have seen this in cities built around the automobile. Should self-driving cars replace other modes of transit like trains which, arguably, from a sustainability standpoint might be preferable?”

Then there are other common-or-garden robots we already meet every day. At the supermarket check-out. At the bank.

Do they take our jobs? Or do they free us up to do better jobs? Do they rob us of human contact? Or do they let us focus more on interactions which are meaningful, with friends or family?

Of course, all those machines are collecting your data. Even reading this article – if you are doing so online – is a measurable piece of personal information. Regulators and legislators at all levels of government, not least the European Union, are trying to put in place laws for how these data are managed.

Vallor’s new job is designed to inform how that is done. And more. Edinburgh University’s Edinburgh Futures Institute, to be based in the old Royal Infirmary starting next year, is designed to bring together different disciplines. It is where humanities meet IT and robotics, just for starters.

Vallor explained: “One of the obstacles right now to developing these technologies in responsible and ethical ways is that you have a lot of technical expertise that is often not shared by people outside the engineering and computer science fields.”

She continued: “You have regulators who don’t really understand the technology and people implementing these tools in their businesses do not know how these tools work. The public may not understand.

“On the other hand, within the technical communities they may not have the understanding of social sciences, the historical perspective, the philosophical and value perspectives.

“They may be so focused on the technical aspects that the potential unintended and undesirable consequences of a particular design choice may never occur to them.”

This is not a purely academic concern. The Cambridge Analytica scandal exposed efforts to manipulate the electorate. The current authoritarian government in Russia has sought to use social media – not least non-lethal Twitter ‘bots’ of a kind which never even haunted Asimov’s dreams – to influence politics around the world. Its efforts have now been replicated by other state actors, according to recent research.

Robots – even Twitter robots – may not have ethics. People do. So Vallor wants to see data scientists and computer engineers work to the same kind of moral – and regulatory – guidelines as doctors. After all, your data is as personal as your flesh and bones.

She said: “One of the primary concerns about data is that it can be used for both liberatory purposes and oppressive purposes. It can be used to inform and it can be used to deceive and obscure for political purposes. It makes an enormous difference which of those trends is dominant. So I am very interested in thinking through how we can make sure that data science develops in a way that favors transparency and reduces disinformation, and oppressive social control, which for some political actors is a very attractive path.”

Vallor does not think there is a “silver bullet” to making data safe but she does think we need to drop the idea that personal information is something we can mine from the internet in the way we dig up coal or iron.

She said: “We need to get away from the model of 'data is the new oil'. This is a really problematic metaphor.

“We have to keep in mind that data are the reflections of people, of people’s lives, not disembodied things.

“We have to treat data with the kind of moral care that is appropriate for something so intimate and personal.

“When we look at data we must remember that we are also looking at people. And when we manipulate data we must think about the fact that we could be manipulating people.”

But it is not just data or robotics scientists who need ethical and regulatory standards. So do other professions using the product of AI or data engineering.

Vallor said: “For any of these disciplines we need to think about what are the internal professional standards we want to develop and whether there are external guardrails we need to set up to make sure that happens.

“We now see that all professionals – in medicine, law, journalism, the military – are now running through data pipelines. It is the foundation of everything else we care about.”

Asimov’s I, Robot and a hundred other movies and books have helped cement a certain concept of progress as something fundamentally technical. Vallor does not buy this.

She wants us to reimagine what progress is. “Does it make the world a happier place, a more just place, a more sustainable place?” she asked. “Does it make a place where life can go on in ways that are as good or better than the ways they have gone on before?”
