You may be reading this on a computer, a tablet or a phone. You almost certainly do your banking online, and much of your shopping. Information on any subject you choose is available with a few keystrokes. You watch films, comedies and series either on catch-up or, increasingly, through one of the newer streaming providers. You don’t send letters anymore – and why should you? – when you can communicate immediately with friends and family.

The downside of the internet, of course, is that you are more open to online fraudsters, children can be exposed to hardcore pornography, more and more advertising has gone digital to the severe detriment of the paper version of this article, and tens of thousands of jobs have perished, in shops, banks, Royal Mail and, of course, newspapers.

It might be called a digital Pandora’s box. In Greek mythology a jar was opened and sickness, death and evil were set loose, only for Pandora to shut the lid and imprison hope. But in August 1991, hope was propelling the World Wide Web when it first appeared: hope that society could be changed through it, that education could be brought to the farthest and poorest reaches of the planet, that simultaneous communication was possible and that all would be equal in it.

And it did what it said and more. It also made some people fabulously wealthy beyond the ambition of any mythical Croesus: Jeff Bezos, creator of Amazon and the richest man in the world, Bill Gates of Microsoft, Facebook’s Mark Zuckerberg and Elon Musk. And it allowed hackers to meddle with elections and Cambridge Analytica to use data handed to it by Facebook to target 80 million voters in Donald Trump’s 2016 election campaign.

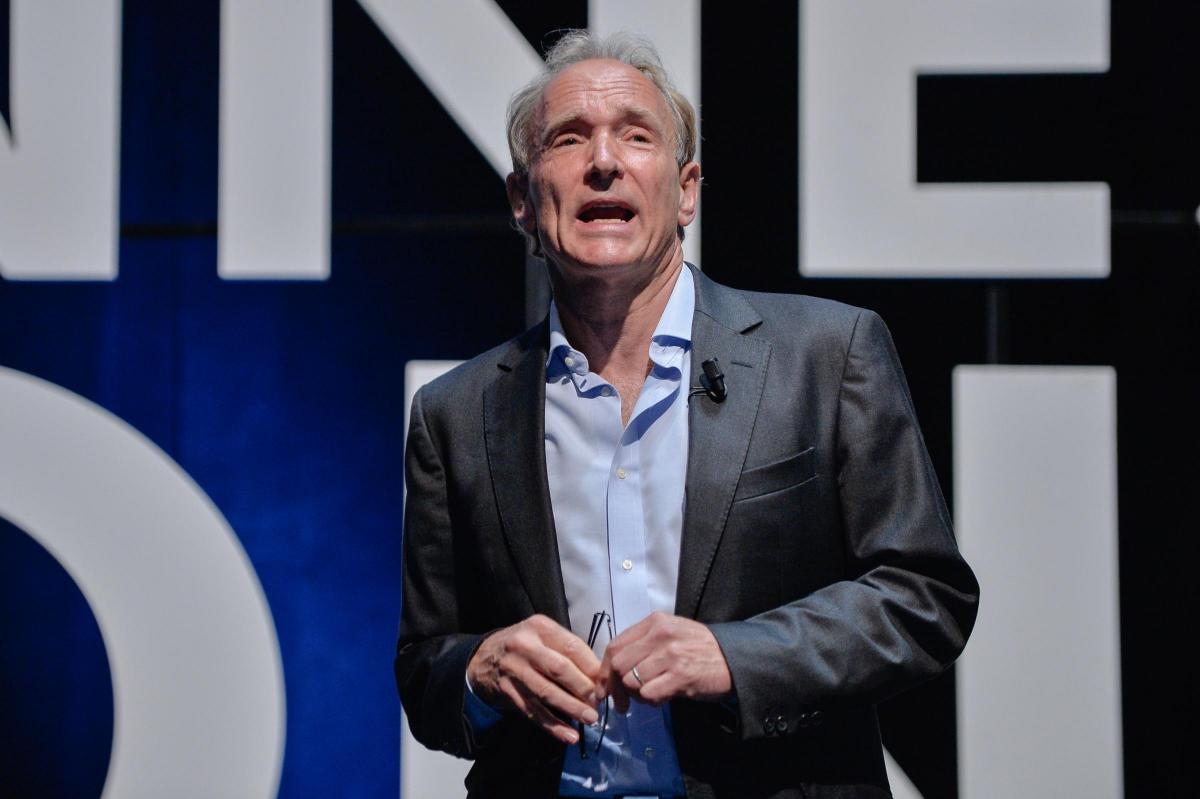

It all began with a child playing with a model railway in his bedroom in London. Tim Berners-Lee was the eldest of four children whose parents were early computer scientists who worked on the first commercially-built computer, the Ferranti Mark 1.

Like many small boys he was interested in trains and played with them on his bedroom floor. Then, possibly through his parents’ interests, he made “electronic gadgets to control trains”, he recalled. “I ended up getting more interested in electronics than trains.”

He went to Oxford, graduating with a first in physics, and, rather than knocking back in the union bars (although he may have done some of that) he knocked up a working computer from an old television he bought in a repair shop and from other bits he found on London’s Tottenham Court Road.

He decided not to do a PhD in physics because no post-graduate he knew enjoyed the work. So, after his degree he worked as an engineer for Plessey and at another company where he helped create typesetting software for printers (doing away with many of the old jobs, which Rupert Murdoch was quick to cotton on to).

But it was at the research centre CERN – the European Organization for Nuclear Research – outside Geneva, where scientists came from all over the world to use its accelerators, that the lightbulb went on for Berners-Lee. He noticed that these great minds were having difficulty sharing information.

“In those days,” he says, “there was different information on different computers. But you had to log on to different computers to get at it. Also, sometimes you had to learn a different programme on each one. Often it was easier just to go and ask people when they were having coffee.”

He saw a way to solve the problem. The internet had been designed in 1973 and rolled out in 1983, but remained an obscure platform. It worked by sending packets of information from computer to computer, a packet being like a postcard with a simple address on it. Although millions of computers were connected through the emerging net, Berners-Lee’s genius was to give them the ability to share information, in a universal language, through the emerging technology of hypertext.

In 1989, he wrote his vision for what would become the World Wide Web (WWW) in a document called “Information Management: A Proposal”. His boss at the time, Mike Sendall, wrote “Vague, but exciting” on the cover.

But it was exciting enough for Sendall to shove a NeXT computer, an early Steve Jobs product, Berners-Lee’s way and give him time to work out the practicalities.

His colleague who championed the project, and created its first logo, the Belgian computer scientist Robert Cailliau, recalls: “Tim and I try to find a catching name for the system. I was determined that the name should not yet again be taken from Greek mythology … Tim proposed ‘World Wide Web’. I like this very much, except that it is difficult to pronounce in French.”

The difference between the internet and the web is that the former is a network of networks, in Berners-Lee’s description, basically made from computers and cables. On the web the connections are hypertext links between documents. “The web made the net useful because people are interested in information,” he said, “and don’t want to know about computers and cables.”

Berners-Lee, alone, wrote the three fundamental technologies which remain the foundation of today’s web, and which pop up on browsers: HTML, the formatting language; the URI (more commonly called the URL), the unique address of each resource; and HTTP, the protocol which retrieves linked resources from across the web.

He later admitted that the initial pair of slashes (“//”) in a web address was not necessary. “It seemed like a good idea at the time,” was his lighthearted apology. By the end of 1990, Berners-Lee had written the first web page editor/browser and the first web server. However, it was only available to the CERN community. He realised that for it to take off it had to be universally available and free.

“Had the technology been proprietary and in my total control it would probably not have taken off,” he recalled.

He was urged to patent his work but he refused. He and CERN agreed to make the underlying code royalty-free and on August 6, 1991, the World Wide Web was born, without fanfare, with the world’s first website publicly available on the internet. The address was http://info.cern.ch/hypertext/WWW/TheProject.html.
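For the curious, that first address can be taken apart to show the pieces Berners-Lee designed. What follows is only a minimal sketch in Python, using the language's standard `urllib.parse` module. The helper name `describe` is our own invention for illustration and is not part of any original CERN software.

```python
from urllib.parse import urlsplit

# Address of the first website, as quoted in the article.
FIRST_SITE = "http://info.cern.ch/hypertext/WWW/TheProject.html"


def describe(address: str) -> dict:
    """Split a web address into its main parts (hypothetical helper)."""
    parts = urlsplit(address)
    return {
        "scheme": parts.scheme,  # the protocol used to fetch the page
        "host": parts.netloc,    # the computer that serves it (follows the "//")
        "path": parts.path,      # the document's location on that computer
    }


if __name__ == "__main__":
    for name, value in describe(FIRST_SITE).items():
        print(f"{name}: {value}")
```

The part before the colon names the protocol, HTTP. The pair of slashes he later apologised for introduces the name of the computer, and the rest is the path to the page on that machine.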

It began to grow markedly before hitting warp speed. In early 1993, in another milestone in the growth of the internet, CERN’s directors declared that the WWW technology was freely available to all forever.

In 1994, Berners-Lee left CERN and his second-floor office after the head of the computing and networking department, David Williams, argued that supporting his work was a misallocation of the organisation’s IT resources.

In 2005, Williams was given an honorary professorship at the University of Edinburgh. He died a year later from cancer. Berners-Lee walked out of CERN and straight into MIT, the Massachusetts Institute of Technology, and also set up the World Wide Web Foundation, whose mission statement is fighting for digital equality, “a world where everyone can access the web and use it to improve their lives”. TimBL, as he is known, was knighted in 2004. It is difficult to overstate the man’s importance. If there were a Nobel prize for computer science he would have a clutch.

He took a communications system, the internet, which only the elite could use, and turned it into a mass medium. And he has never taken a penny from his invention. Others, of course, have plundered it, bought newspapers, created business empires, founded car companies, and sent rockets into space.

Berners-Lee intended his web to be a powerful democratic tool, but it has also exacerbated inequality and brought horrors into our houses. He’s now working for, if not exactly a reinvention of his invention, a contract between governments and corporations to protect the web from abuse and to ensure it benefits humanity.

That may be an even more formidable undertaking than his original one.
